Who has the fastest F1 website in 2021? Part 5

This is part 5 in a multi-part series looking at the loading performance of F1 websites. Not interested in F1? It shouldn't matter. This is just a performance review of 10 recently-built/updated sites that have broadly the same goal, but are built by different teams, and have different performance issues.

- Part 1: Methodology & Alpha Tauri

- Part 2: Alfa Romeo

- Part 3: Red Bull

- Part 4: Williams

- ➡️ Part 5: Aston Martin

- Part 6: Ferrari

- Part 7: Haas

- Part 8: McLaren

- Bonus: Google I/O

- …more coming soon…

Aston Martin


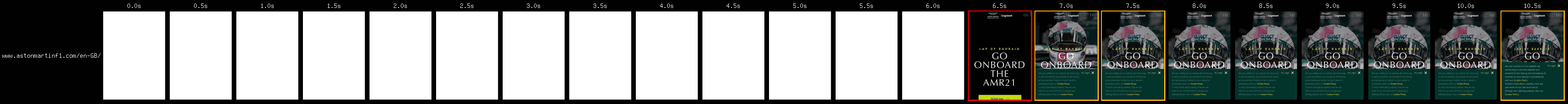

- First run: 6.2s (raw results)

- Second run: 2.7s (raw results)

- Total: 8.9s

- 2019 total: 84.2s

Pretty good! This team was called Racing Point last year (and 2019), and their site was a bit of a performance disaster, but it looks like that's all fixed! In 2020 their car was a direct copy of the Mercedes, earning them the nickname Tracing Point, which is top-quality punnagement.

Cutting the mustard

I was particularly pleased to see this at the bottom of the <body>:

```html
<script nomodule src="https://polyfill.io/v3/polyfill.min.js?…"></script>
```

They use Polyfill.io to bring in the required polyfills. Polyfill.io uses the User-Agent string to decide which polyfills are needed, and as a result the script is empty in modern browsers. To avoid making a request that results in nothing, the Aston Martin team used nomodule to prevent the download in browsers that support JavaScript modules.

This is a loose form of feature detection that my former BBC colleague Tom Maslen called "Cutting the mustard". Browsers that support modules just so happen to support the rest of the stuff that's required, so nomodule becomes a convenient feature detect.

I'd take this a step further. If your site's core content works without JavaScript, then you can make that the experience for those older browsers. Serve all your JavaScript with type="module" to prevent older browsers from running it, and now you can write modern JavaScript without the stress and pain of dealing with those older browsers.
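Here's a rough sketch of that split, as a hypothetical TypeScript helper that renders a page's script tags.

- The site's own JavaScript goes out as `type="module"`, so only module-supporting browsers run it.
- An optional polyfill bundle is marked `nomodule`, so only older browsers use it.

The function name, the options shape, and the example paths are all invented for illustration. In practice this logic would live in whatever templating your site already uses.

```typescript
// Hypothetical options for rendering a page's scripts.
interface ScriptOptions {
  // The site's own scripts, written as modern JavaScript.
  modules: string[];
  // Optional polyfill bundle for browsers without module support.
  legacyPolyfill?: string;
}

// Minimal escaping so a URL can't break out of the src="…" attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;");
}

// Uses module support as a "cutting the mustard" check:
// - type="module" scripts are ignored by browsers that don't support modules.
// - nomodule scripts are skipped by browsers that do support modules.
function renderScripts(options: ScriptOptions): string {
  const tags = options.modules.map(
    (src) => `<script type="module" src="${escapeAttr(src)}"></script>`
  );
  const polyfill = options.legacyPolyfill;
  if (polyfill) {
    tags.push(`<script nomodule src="${escapeAttr(polyfill)}"></script>`);
  }
  return tags.join("\n");
}
```

Leaving `legacyPolyfill` out gives the fully module-only setup. In that setup, older browsers simply get the no-JavaScript experience.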

Possible improvements

- 3.5 second delay to content render caused by font foundry CSS. Covered in detail below.

- 1.25 second delay to content render caused by additional CSS on another server. This problem is covered in part 1, and the solution here is just to move the CSS to the same server as the page.

- Paragraph layout shift caused by late-loading fonts, which could be loaded earlier using some preload tags.

- 0.5 second delay to main image caused by poor image compression.

These delays overlap in some cases.

Key issue: Font foundry CSS

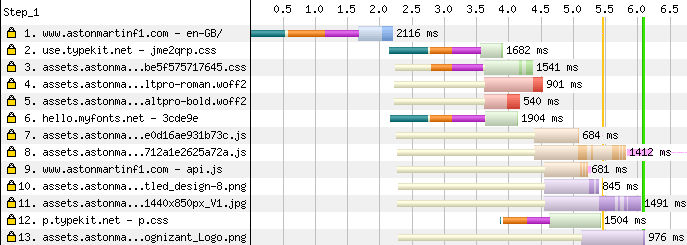

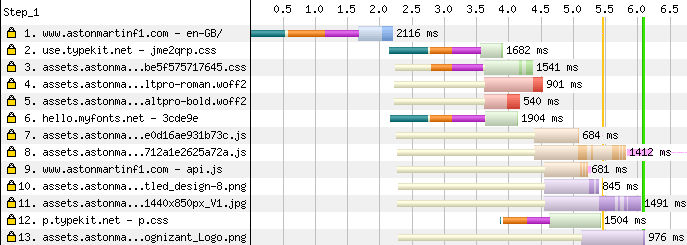

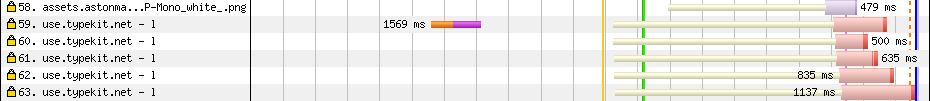

Here's the start of the waterfall:

I see extra connections on rows 2, 3, 6, and 12. The CSS on row 3 belongs to the site, so that should be moved to the same server as the page to avoid that extra connection, as covered in part 1.

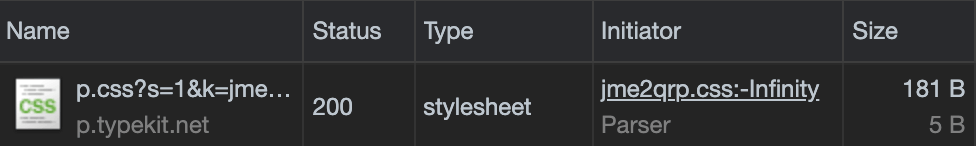

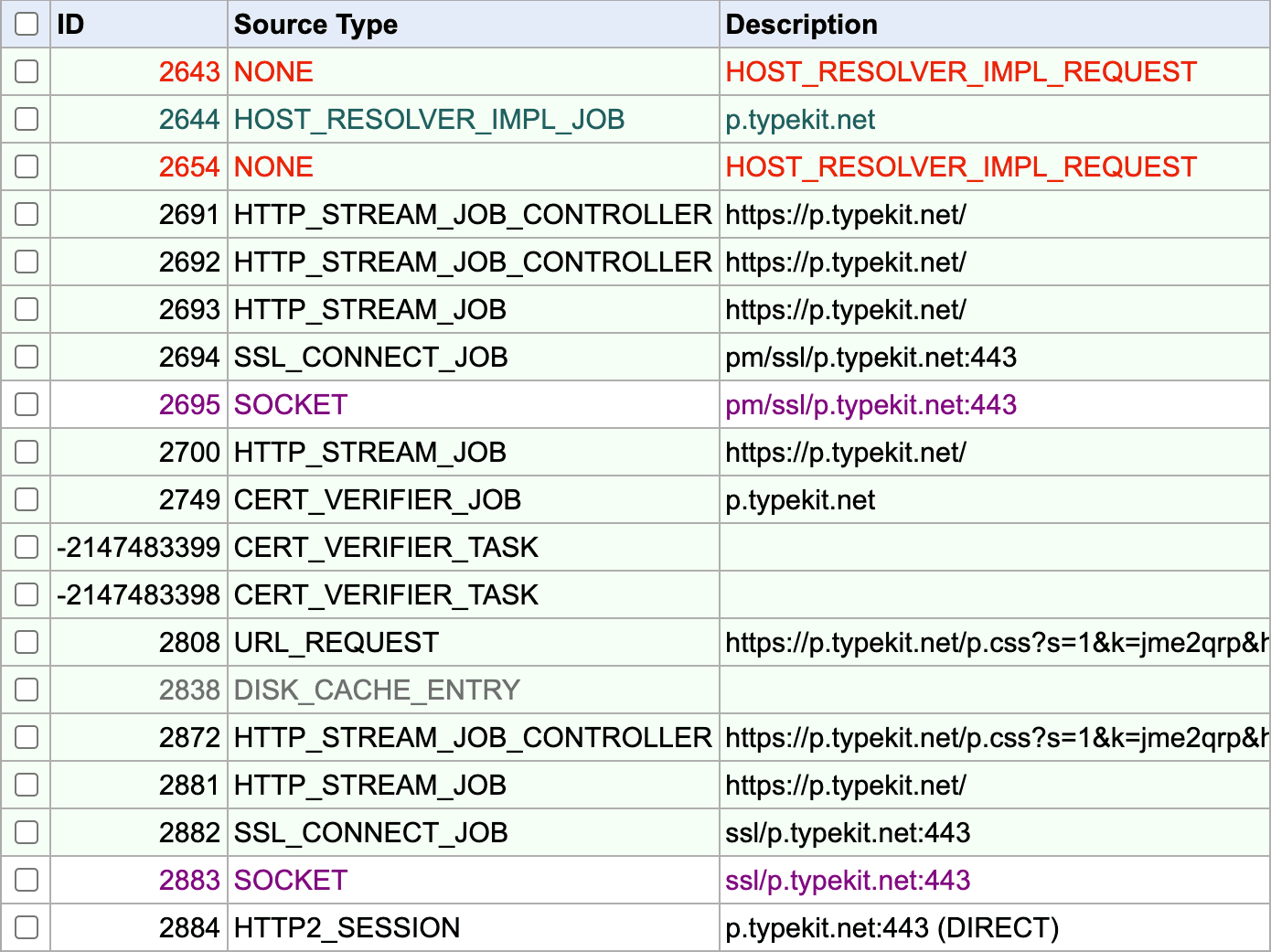

The lateness of row 12 suggests it's being initiated by another blocking resource, and Chrome DevTools confirms it:

I'm not sure why it thinks it's on line -Infinity, though. Anyway… If this seems familiar, it's because it's the same font foundry tracking issue we saw in part 1, and the best solution is to load this stuff async. But there are some new details here worth exploring:

The hello.myfonts.net CSS request on row 6 happens in parallel with the CSS on row 3, even though it's the CSS on row 3 that imports the CSS on row 6. This is because the developers have worked hard to limit the damage of those other-server requests:

```html
<link rel="preconnect" href="https://p.typekit.net" crossorigin />
<link rel="preconnect" href="https://use.typekit.net" crossorigin />
<link rel="preload" href="//hello.myfonts.net/count/3cde9e" as="style" />
```

There's a preload that makes the request happen in parallel. This is great to see! It doesn't solve the problem as effectively as loading the font CSS async, but it's still much faster than what we saw on the Alpha Tauri site.
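That preload is written by hand. If you control the build, a hypothetical helper like the one below could find the `@import` URLs in your own CSS and generate matching preload tags, so the two can't drift apart.

This is a sketch under some assumptions:

- It takes the CSS as a plain string.
- It only understands the simple `@import url(…)` and `@import "…"` forms. It isn't a real CSS parser.
- The function names are invented.

```typescript
// Finds URLs in simple @import rules. Handles:
//   @import url('a.css');  @import url("a.css");  @import url(a.css);
//   @import 'a.css';       @import "a.css";
// A regex sketch, not a CSS parser: comments and escaped quotes aren't
// handled, and any media query after the URL is ignored.
function findCssImports(css: string): string[] {
  const pattern =
    /@import\s+(?:url\(\s*(['"]?)([^'")\s]+)\1\s*\)|(['"])([^'"]+)\3)/g;
  const urls: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(css)) !== null) {
    // Group 2 holds the url(...) form, group 4 the bare-string form.
    const url = match[2] || match[4];
    if (url) urls.push(url);
  }
  return urls;
}

// One preload per import. No crossorigin attribute: @import requests are
// credentialed, so the preload must be too, or it won't be reused.
function preloadTags(css: string): string {
  return findCssImports(css)
    .map((url) => `<link rel="preload" href="${url}" as="style" />`)
    .join("\n");
}
```

This only looks one level deep, which is enough for the row 3 to row 6 chain here. Loading the font CSS async is still the better fix.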

They use preconnect too. Preconnects are great if you don't know the full URL of an important other-site resource, but you do know its origin. In this case, some fonts come from use.typekit.net, and we can see the benefit of the preconnect:

See how the connection phase of the request happens really early on row 59? That's the preconnect in action. A full preload would have been better, but I guess they didn't have a way to know the URLs ahead of time. But wait…

The connection on row 12 happens late. But the <head> contains:

```html
<link rel="preconnect" href="https://p.typekit.net" crossorigin />
```

…so what's going on? Why isn't that preconnect happening? Well, it is happening, but unfortunately the connection isn't used.

Connections and credentials

By default, CORS requests are made without credentials, which means no cookies or other things that directly identify the user. If browsers sent no-credential requests down the same HTTP connection as credentialed requests, the server could link the two together, which makes the whole thing pointless. Imagine if, halfway through a phone call, you put on a different voice and pretended to be someone else. The other person is unlikely to be fooled, because it's part of the same call. So, for requests to another origin, browsers use different connections for no-credential and credentialed requests.

Which brings us back to:

```html
<link rel="preconnect" href="https://p.typekit.net" crossorigin />
<link rel="preconnect" href="https://use.typekit.net" crossorigin />
```

The crossorigin attribute tells the browser to make a no-credential connection, which is ideal for requests that use CORS. Font requests use CORS, so that second preconnect is doing the right thing. But that first preconnect is there to handle:

```css
@import url('https://p.typekit.net/p.css?s=1…');
```

…and @import requests in CSS don't use CORS; they're fully credentialed requests. The preconnection still happens, but it isn't used. In fact, it's likely taking up bandwidth that could have been used elsewhere.
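To make the mismatch concrete, here's a deliberately simplified TypeScript model of a connection pool. Like the behaviour described above, it keys connections by origin *and* credentials mode.

This isn't how Chrome is actually implemented; real browsers track more than this. The types and function names are invented for the sketch.

```typescript
// Simplified model: a connection can only be reused by requests to the
// same origin with the same credentials mode.
type ConnectionMode = "credentialed" | "no-credentials";

interface Pool {
  open: string[]; // keys like "https://p.typekit.net|no-credentials"
  connectionsCreated: number;
}

function poolKey(origin: string, mode: ConnectionMode): string {
  return `${origin}|${mode}`;
}

// Opens a connection unless a matching one is already open.
// Returns true if an existing connection was reused.
function connect(pool: Pool, origin: string, mode: ConnectionMode): boolean {
  const key = poolKey(origin, mode);
  if (pool.open.indexOf(key) !== -1) return true;
  pool.open.push(key);
  pool.connectionsCreated++;
  return false;
}

// <link rel="preconnect" crossorigin> opens a no-credentials connection;
// without the attribute, it opens a credentialed one.
function preconnect(pool: Pool, origin: string, crossorigin: boolean): void {
  connect(pool, origin, crossorigin ? "no-credentials" : "credentialed");
}

// A CSS @import is a credentialed request; a web font is a no-credentials
// CORS request. Returns true if it reused an already-open connection.
function makeRequest(pool: Pool, origin: string, mode: ConnectionMode): boolean {
  return connect(pool, origin, mode);
}
```

Here's what the model does in each case:

- **With `crossorigin` on the preconnect:** the credentialed @import request misses the preconnected socket, and a second connection is opened. This mirrors the two sockets in the net-export log below.
- **Without `crossorigin`:** the request reuses the preconnected connection.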

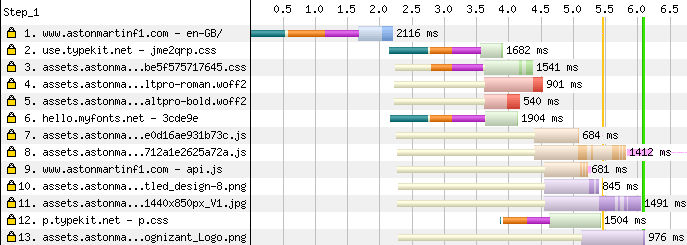

Unfortunately, Chrome DevTools doesn't show this extra connection, so I used chrome://net-export/ to create a full log of browser network activity. Because this captures everything the browser does, I started a fresh instance of Chrome to avoid capturing noise from other tabs. Here are the key results:

This is pretty low-level stuff, so don't worry if it doesn't make sense; there's a lot of it I don't understand. But what I can see is row 2691, a request to set up a connection to p.typekit.net, which results in the socket in row 2695. The pm (privacy mode) code in the connection identifies this as a no-credentials connection. This is the preconnect.

Then, in row 2872, we get the actual request for the CSS resource, and, sadly, another socket in row 2883. This connection doesn't have the pm code, because it's for credentialed requests.

The solution here is simple: remove the crossorigin attribute from that preconnect. All requests to that particular origin are credentialed, so a credentialed preconnect is the one that gets reused. Sometimes you'll need two preconnects for the same origin: one for credentialed fetches, and another for no-credential fetches.
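The rule above can be written down as a small hypothetical TypeScript helper. Given which kinds of request a page makes to an origin, it returns the preconnect tags needed so each kind has a connection to reuse. The input shape and names are assumptions made for this sketch.

```typescript
// How a page fetches from one origin (a hypothetical input shape).
interface OriginUsage {
  origin: string;
  credentialed: boolean; // e.g. CSS @import
  noCredentials: boolean; // e.g. web fonts, which use CORS
}

// Returns the preconnect tags needed so each kind of request has a
// connection to reuse: plain for credentialed, crossorigin for no-credentials.
function preconnectTags(usage: OriginUsage): string[] {
  const tags: string[] = [];
  if (usage.credentialed) {
    tags.push(`<link rel="preconnect" href="${usage.origin}" />`);
  }
  if (usage.noCredentials) {
    tags.push(`<link rel="preconnect" href="${usage.origin}" crossorigin />`);
  }
  return tags;
}
```

Based on the requests described here:

- p.typekit.net only needs the plain preconnect.
- use.typekit.net only needs the crossorigin one.
- An origin serving both kinds of request would get both tags.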

Phew!

Issue: Main image compression

The image compression on the Aston Martin site is mostly very good. Someone clearly took time over it. Unfortunately, one place it isn't so good is the all-important main image of the page:

As usual, the WebP and AVIF have more smoothing, but this image sits underneath text, so you can get away with a lot of compression. Perhaps even more than above.

How fast could it be?

Here's how fast it looks with the first-render unblocked and the image optimised.

- Original

- Optimised

It isn't worlds apart. The Aston Martin site is generally well-built.

Scoreboard

| Team | Score | vs 2019 | Gap to leader |
|---|---|---|---|
| Red Bull | 8.6 | -7.2 | Leader |
| Aston Martin | 8.9 | -75.3 | +0.3 |
| Williams | 11.1 | -3.0 | +2.5 |
| Alpha Tauri | 22.1 | +9.3 | +13.5 |
| Alfa Romeo | 23.4 | +3.3 | +14.8 |

Aston Martin slots in just behind Red Bull. It's incredibly close for the lead! But with five teams still to go, can anyone beat Red Bull to the title?
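The scoreboard is simple arithmetic:

- Each score is the first-run time plus the second-run time (6.2s + 2.7s = 8.9s for Aston Martin).
- "vs 2019" is that score minus the 2019 total, so Aston Martin's -75.3 implies a 2019 total of 84.2s.
- The last column is the gap to the leader.

Here's a small TypeScript sketch of that bookkeeping. The type and function names are invented for illustration, and the scores are copied from the table.

```typescript
interface TeamScore {
  team: string;
  score: number; // seconds: first run + second run
}

// Rounds to one decimal place, hiding floating-point noise
// such as 8.9 - 8.6 not being exactly 0.3.
function round1(n: number): number {
  return Math.round(n * 10) / 10;
}

// Formats a difference the way the scoreboard does, e.g. "+0.3" or "-75.3".
function formatDelta(n: number): string {
  const r = round1(n);
  return (r > 0 ? "+" : "") + r.toFixed(1);
}

// Sorts teams fastest-first and works out each team's gap to the leader.
function standings(scores: TeamScore[]): { team: string; score: number; gap: string }[] {
  const sorted = scores.slice().sort((a, b) => a.score - b.score);
  const leader = sorted.reduce((min, s) => Math.min(min, s.score), Infinity);
  return sorted.map((s, i) => ({
    team: s.team,
    score: s.score,
    gap: i === 0 ? "Leader" : formatDelta(s.score - leader),
  }));
}
```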
